- Manually clear the runner cache

- Set maximum job timeout for a runner

- Be careful with sensitive information

- Determine the IP address of a runner

- Use tags to control which jobs a runner can run

- Configure runner behavior with variables

- System calls not available on GitLab.com shared runners

- Artifact and cache settings

Configuring runners

If you have installed your own runners, you can configure and secure them in GitLab.

If you need to configure runners on the machine where you installed GitLab Runner, see the GitLab Runner documentation.

Manually clear the runner cache

Read clearing the cache.

Set maximum job timeout for a runner

For each runner, you can specify a maximum job timeout. This timeout, if smaller than the project defined timeout, takes precedence.

This feature can be used to prevent your shared runner from being overwhelmed by a project that has jobs with a long timeout (for example, one week).

On GitLab.com, you cannot override the job timeout for shared runners and must use the project defined timeout.

To set the maximum job timeout:

- In a project, go to Settings > CI/CD > Runners.

- Select your specific runner to edit the settings.

- Enter a value under Maximum job timeout. Must be 10 minutes or more. If not defined, the project’s job timeout setting is used.

- Select Save changes.

How this feature works:

Example 1 - Runner timeout bigger than project timeout

- You set the maximum job timeout for a runner to 24 hours

- You set the CI/CD Timeout for a project to 2 hours

- You start a job

- The job, if running longer, times out after 2 hours

Example 2 - Runner timeout not configured

- You remove the maximum job timeout configuration from a runner

- You set the CI/CD Timeout for a project to 2 hours

- You start a job

- The job, if running longer, times out after 2 hours

Example 3 - Runner timeout smaller than project timeout

- You set the maximum job timeout for a runner to 30 minutes

- You set the CI/CD Timeout for a project to 2 hours

- You start a job

- The job, if running longer, times out after 30 minutes
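The three examples above only vary the runner and project settings. As a minimal sketch of how a job-level value fits in, assuming the `timeout` keyword in `.gitlab-ci.yml` (the job name and script below are hypothetical placeholders):

```yaml
# Hypothetical job; only the timeout keyword matters for this sketch.
long_build:
  script:
    - ./build.sh   # placeholder command
  # Assumption: a job-level timeout may exceed the project timeout,
  # but the runner's maximum job timeout still caps it.
  timeout: 3h 30m
```

Under these assumptions, with a runner maximum of 24 hours the job would time out after 3 hours 30 minutes, and with a runner maximum of 30 minutes it would still time out after 30 minutes.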

Be careful with sensitive information

With some runner executors, if you can run a job on the runner, you can get full access to the file system, and thus to any code it runs as well as the token of the runner. With shared runners, this means that anyone who runs jobs on the runner can access anyone else’s code that runs on the runner.

In addition, because you can get access to the runner token, it is possible to create a clone of a runner and submit false jobs, for example.

The above is easily avoided by restricting the usage of shared runners on large public GitLab instances, controlling access to your GitLab instance, and using more secure runner executors.

Prevent runners from revealing sensitive information

You can protect runners so they don’t reveal sensitive information.

When a runner is protected, the runner picks jobs created on protected branches or protected tags only, and ignores other jobs.

To protect or unprotect a runner:

Whenever a project is forked, it copies the settings of the jobs that relate

to it. This means that if you have shared runners set up for a project and

someone forks that project, the shared runners serve jobs of this project.

Mentioned briefly earlier, but the following things of runners can be exploited.

We’re always looking for contributions that can mitigate these

Security Considerations.

If you think that a registration token for a project was revealed, you should

reset it. A registration token can be used to register another runner for the project.

That new runner may then be used to obtain the values of secret variables or to clone project code.

To reset the registration token:

From now on the old token is no longer valid and does not register

any new runners to the project. If you are using any tools to provision and

register new runners, the tokens used in those tools should be updated to reflect the

value of the new token.

If you think that an authentication token for a runner was revealed, you should

reset it. An attacker could use the token to clone a runner.

To reset the authentication token, unregister the runner

and then register it again.

To verify that the previous authentication token has been revoked, use the Runners API.

It may be useful to know the IP address of a runner so you can troubleshoot

issues with that runner. GitLab stores and displays the IP address by viewing

the source of the HTTP requests it makes to GitLab when polling for jobs. The

IP address is always kept up to date so if the runner IP changes it

automatically updates in GitLab.

The IP address for shared runners and specific runners can be found in

different places.

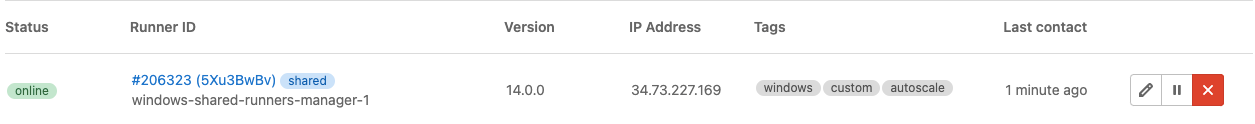

To view the IP address of a shared runner you must have admin access to

the GitLab instance. To determine this:

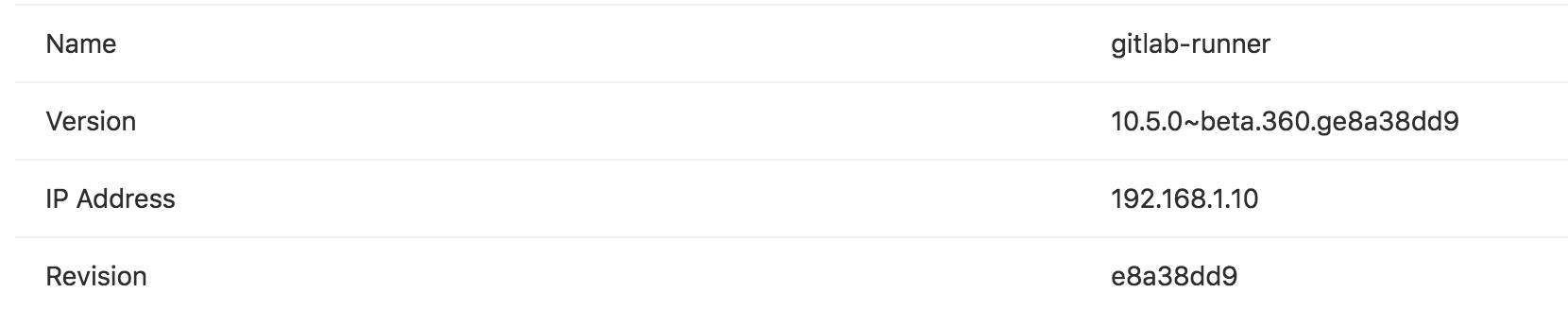

To can find the IP address of a runner for a specific project,

you must have the Owner role for the

project.

You must set up a runner to be able to run all the different types of jobs

that it may encounter on the projects it’s shared over. This would be

problematic for large amounts of projects, if it weren’t for tags.

GitLab CI/CD tags are not the same as Git tags. GitLab CI/CD tags are associated with runners.

Git tags are associated with commits.

By tagging a runner for the types of jobs it can handle, you can make sure

shared runners will only run the jobs they are equipped to run.

For instance, at GitLab we have runners tagged with When you register a runner, its default behavior is to only pick

tagged jobs.

To change this, you must have the Owner role for the project.

To make a runner pick untagged jobs:

Below are some example scenarios of different variations.

The following examples illustrate the potential impact of the runner being set

to run only tagged jobs.

Example 1:

Example 2:

Example 3:

The following examples illustrate the potential impact of the runner being set

to run tagged and untagged jobs.

Example 1:

Example 2:

You can use tags to run different jobs on different platforms. For

example, if you have an OS X runner with tag Introduced in .

You can use CI/CD variables with You can use CI/CD variables to configure runner Git behavior

globally or for individual jobs:

You can also use variables to configure how many times a runner

attempts certain stages of job execution.

You can set the There are three possible values: However, This has limitations when using the Docker Machine executor.

A Git strategy of It can be used for jobs that operate exclusively on artifacts, like a deployment job.

Git repository data may be present, but it’s likely out of date. You should only

rely on files brought into the local working copy from cache or artifacts.

Requires GitLab Runner v1.10+.

The There are three possible values: For this feature to work correctly, the submodules must be configured

(in You can provide additional flags to control advanced behavior using Introduced in GitLab Runner 9.3.

The If set to If Introduced in GitLab Runner 11.10

The If For example:

Use the If For example, the default flags are The configuration above results in Where

Use the Git honors the last occurrence of a flag in the list of arguments, so manually

providing them in You can use this variable to fetch the latest remote The configuration above results in Introduced in GitLab 8.9 as an experimental feature.

You can specify the depth of fetching and cloning using In GitLab 12.0 and later, newly-created projects automatically have a

default If you use a depth of Git fetching and cloning is based on a ref, such as a branch name, so runners

can’t clone a specific commit SHA. If multiple jobs are in the queue, or

you’re retrying an old job, the commit to be tested must be within the

Git history that is cloned. Setting too small a value for Jobs that rely on To fetch or clone only the last 3 commits:

You can set it globally or per-job in the

By default, GitLab Runner clones the repository in a unique subpath of the

The This can only be used when An executor that uses a concurrency greater than The runner does not try to prevent this situation. It’s up to the administrator

and developers to comply with the requirements of runner configuration.

To avoid this scenario, you can use a unique path within The most stable configuration that should work well in any scenario and on any executor

is to use The The value of For example, you define both the variables below in your

The value of Introduced in GitLab, it requires GitLab Runner v1.9+.

You can set the number of attempts that the running job tries to execute

the following stages:

The default is one single attempt.

Example:

You can set them globally or per-job in the GitLab.com shared runners run on CoreOS. This means that you cannot use some system calls, like Introduced in GitLab Runner 13.9.

Artifact and cache settings control the compression ratio of artifacts and caches.

Use these settings to specify the size of the archive produced by a job.

For GitLab Pages to serve

ARTIFACT_COMPRESSION_LEVEL: fastest setting, as only uncompressed zip archives

support this feature.

A meter can be enabled to provide the rate of transfer for uploads and downloads.

You can set a maximum time for cache upload and download with the

If you didn't find what you were looking for,

search the docs.

If you want help with something specific and could use community support,

.

For problems setting up or using this feature (depending on your GitLab

subscription).

Forks

Attack vectors in runners

Reset the runner registration token for a project

Reset the runner authentication token

Determine the IP address of a runner

Determine the IP address of a shared runner

Determine the IP address of a specific runner

Use tags to control which jobs a runner can run

rails if they contain

the appropriate dependencies to run Rails test suites.

Set a runner to run untagged jobs

runner runs only tagged jobs

docker tag.

hello tag is executed and stuck.

docker tag.

docker tag is executed and run.

docker tag.

runner is allowed to run untagged jobs

docker tag.

docker tag defined is executed and run.

docker tag defined is stuck.

Use tags to run jobs on different platforms

osx and a Windows runner with tag

windows, you can run a job on each platform:

windows job:

stage:

- build

tags:

- windows

script:

- echo Hello, %USERNAME%!

osx job:

stage:

- build

tags:

- osx

script:

- echo "Hello, $USER!"

Use CI/CD variables in tags

tags for dynamic runner selection:

variables:

KUBERNETES_RUNNER: kubernetes

job:

tags:

- docker

- $KUBERNETES_RUNNER

script:

- echo "Hello runner selector feature"

Configure runner behavior with variables

GIT_STRATEGY

GIT_SUBMODULE_STRATEGY

GIT_CHECKOUT

GIT_CLEAN_FLAGS

GIT_FETCH_EXTRA_FLAGS

GIT_SUBMODULE_UPDATE_FLAGS

GIT_DEPTH (shallow cloning)

GIT_CLONE_PATH (custom build directories)

TRANSFER_METER_FREQUENCY (artifact/cache meter update frequency)

ARTIFACT_COMPRESSION_LEVEL (artifact archiver compression level)

CACHE_COMPRESSION_LEVEL (cache archiver compression level)

CACHE_REQUEST_TIMEOUT (cache request timeout)

Git strategy

GIT_STRATEGY=none requires GitLab Runner v1.7+.

GIT_STRATEGY used to fetch the repository content, either

globally or per-job in the variables section:

variables:

GIT_STRATEGY: clone

clone, fetch, and none. If left unspecified,

jobs use the project’s pipeline setting.

clone is the slowest option. It clones the repository from scratch for every

job, ensuring that the local working copy is always pristine.

If an existing worktree is found, it is removed before cloning.

fetch is faster as it re-uses the local working copy (falling back to clone

if it does not exist). git clean is used to undo any changes made by the last

job, and git fetch is used to retrieve commits made after the last job ran.

fetch does require access to the previous worktree. This works

well when using the shell or docker executor because these

try to preserve worktrees and try to re-use them by default.

none also re-uses the local working copy, but skips all Git

operations normally done by GitLab. GitLab Runner pre-clone scripts are also skipped,

if present. This strategy could mean you need to add fetch and checkout commands

to your .gitlab-ci.yml script.

Git submodule strategy

GIT_SUBMODULE_STRATEGY variable is used to control if / how Git

submodules are included when fetching the code before a build. You can set them

globally or per-job in the variables section.

none, normal, and recursive:

none means that submodules are not included when fetching the project

code. This is the default, which matches the pre-v1.10 behavior.

normal means that only the top-level submodules are included. It’s

equivalent to:

git submodule sync

git submodule update --init

recursive means that all submodules (including submodules of submodules)

are included. This feature needs Git v1.8.1 and later. When using a

GitLab Runner with an executor not based on Docker, make sure the Git version

meets that requirement. It’s equivalent to:

git submodule sync --recursive

git submodule update --init --recursive

.gitmodules) with either:

GIT_SUBMODULE_UPDATE_FLAGS.

Git checkout

GIT_CHECKOUT variable can be used when the GIT_STRATEGY is set to either

clone or fetch to specify whether a git checkout should be run. If not

specified, it defaults to true. You can set them globally or per-job in the

variables section.

false, the runner:

fetch - updates the repository and leaves the working copy on

the current revision,

clone - clones the repository and leaves the working copy on the

default branch.

GIT_CHECKOUT is set to true, both clone and fetch work the same way.

The runner checks out the working copy of a revision related

to the CI pipeline:

variables:

GIT_STRATEGY: clone

GIT_CHECKOUT: "false"

script:

- git checkout -B master origin/master

- git merge $CI_COMMIT_SHA

Git clean flags

GIT_CLEAN_FLAGS variable is used to control the default behavior of

git clean after checking out the sources. You can set it globally or per-job in the

variables section.

GIT_CLEAN_FLAGS accepts all possible options of the

command.

git clean is disabled if GIT_CHECKOUT: "false" is specified.

GIT_CLEAN_FLAGS is:

git clean flags default to -ffdx.

none, git clean is not executed.

variables:

GIT_CLEAN_FLAGS: -ffdx -e cache/

script:

- ls -al cache/

Git fetch extra flags

GIT_FETCH_EXTRA_FLAGS variable to control the behavior of

git fetch. You can set it globally or per-job in the variables section.

GIT_FETCH_EXTRA_FLAGS accepts all options of the The default flags are:

GIT_DEPTH.

origin.

GIT_FETCH_EXTRA_FLAGS is:

git fetch flags default to --prune --quiet along with the default flags.

none, git fetch is executed only with the default flags.

--prune --quiet, so you can make git fetch more verbose by overriding this with just --prune:

variables:

GIT_FETCH_EXTRA_FLAGS: --prune

script:

- ls -al cache/

git fetch being called this way:

git fetch origin $REFSPECS --depth 50 --prune

$REFSPECS is a value provided to the runner internally by GitLab.

Git submodule update flags

GIT_SUBMODULE_UPDATE_FLAGS variable to control the behavior of git submodule update

when GIT_SUBMODULE_STRATEGY is set to either normal or recursive.

You can set it globally or per-job in the variables section.

GIT_SUBMODULE_UPDATE_FLAGS accepts all options of the

subcommand. However, note that GIT_SUBMODULE_UPDATE_FLAGS flags are appended after a few default flags:

--init, if GIT_SUBMODULE_STRATEGY was set to normal or recursive.

--recursive, if GIT_SUBMODULE_STRATEGY was set to recursive.

GIT_DEPTH. See the default value below.

GIT_SUBMODULE_UPDATE_FLAGS will also override these default flags.

HEAD instead of the commit tracked,

in the repository, or to speed up the checkout by fetching submodules in multiple parallel jobs:

variables:

GIT_SUBMODULE_STRATEGY: recursive

GIT_SUBMODULE_UPDATE_FLAGS: --remote --jobs 4

script:

- ls -al .git/modules/

git submodule update being called this way:

git submodule update --init --depth 50 --recursive --remote --jobs 4

--remote flag. In most cases, it is better to explicitly track

submodule commits as designed, and update them using an auto-remediation/dependency bot.Shallow cloning

GIT_DEPTH.

GIT_DEPTH does a shallow clone of the repository and can significantly speed up cloning.

It can be helpful for repositories with a large number of commits or old, large binaries. The value is

passed to git fetch and git clone.

git depth value of 50.

1 and have a queue of jobs or retry

jobs, jobs may fail.

GIT_DEPTH can make

it impossible to run these old commits and unresolved reference is displayed in

job logs. You should then reconsider changing GIT_DEPTH to a higher value.

git describe may not work correctly when GIT_DEPTH is

set since only part of the Git history is present.

variables:

GIT_DEPTH: "3"

variables section.

Custom build directories

$CI_BUILDS_DIR directory. However, your project might require the code in a

specific directory (Go projects, for example). In that case, you can specify

the GIT_CLONE_PATH variable to tell the runner the directory to clone the

repository in:

variables:

GIT_CLONE_PATH: $CI_BUILDS_DIR/project-name

test:

script:

- pwd

GIT_CLONE_PATH has to always be within $CI_BUILDS_DIR. The directory set in $CI_BUILDS_DIR

is dependent on executor and configuration of runners.builds_dir

setting.

custom_build_dir is enabled in the

runner’s configuration.

This is the default configuration for the docker and kubernetes executors.

Handling concurrency

1 might lead

to failures. Multiple jobs might be working on the same directory if the builds_dir

is shared between jobs.

$CI_BUILDS_DIR, because runner

exposes two additional variables that provide a unique ID of concurrency:

$CI_CONCURRENT_ID: Unique ID for all jobs running within the given executor.

$CI_CONCURRENT_PROJECT_ID: Unique ID for all jobs running within the given executor and project.

$CI_CONCURRENT_ID in the GIT_CLONE_PATH. For example:

variables:

GIT_CLONE_PATH: $CI_BUILDS_DIR/$CI_CONCURRENT_ID/project-name

test:

script:

- pwd

$CI_CONCURRENT_PROJECT_ID should be used in conjunction with $CI_PROJECT_PATH

as the $CI_PROJECT_PATH provides a path of a repository. That is, group/subgroup/project. For example:

variables:

GIT_CLONE_PATH: $CI_BUILDS_DIR/$CI_CONCURRENT_ID/$CI_PROJECT_PATH

test:

script:

- pwd

Nested paths

GIT_CLONE_PATH is expanded once and nesting variables

within is not supported.

.gitlab-ci.yml file:

variables:

GOPATH: $CI_BUILDS_DIR/go

GIT_CLONE_PATH: $GOPATH/src/namespace/project

GIT_CLONE_PATH is expanded once into

$CI_BUILDS_DIR/go/src/namespace/project, and results in failure

because $CI_BUILDS_DIR is not expanded.

Job stages attempts

variables:

GET_SOURCES_ATTEMPTS: 3

variables section.

System calls not available on GitLab.com shared runners

getlogin, from the C standard library.

Artifact and cache settings

CACHE_REQUEST_TIMEOUT setting.

This setting can be useful when slow cache uploads substantially increase the duration of your job.

```yaml
variables:
  # output upload and download progress every 2 seconds
  TRANSFER_METER_FREQUENCY: "2s"

  # Use fast compression for artifacts, resulting in larger archives
  ARTIFACT_COMPRESSION_LEVEL: "fast"

  # Use no compression for caches
  CACHE_COMPRESSION_LEVEL: "fastest"

  # Set maximum duration of cache upload and download
  CACHE_REQUEST_TIMEOUT: 5
```

| Variable | Description |
|----------|-------------|
| TRANSFER_METER_FREQUENCY | Specify how often to print the meter’s transfer rate. It can be set to a duration (for example, 1s or 1m30s). A duration of 0 disables the meter (default). When a value is set, the pipeline shows a progress meter for artifact and cache uploads and downloads. |
| ARTIFACT_COMPRESSION_LEVEL | To adjust compression ratio, set to fastest, fast, default, slow, or slowest. This setting works with the Fastzip archiver only, so the GitLab Runner feature flag FF_USE_FASTZIP must also be enabled. |
| CACHE_COMPRESSION_LEVEL | To adjust compression ratio, set to fastest, fast, default, slow, or slowest. This setting works with the Fastzip archiver only, so the GitLab Runner feature flag FF_USE_FASTZIP must also be enabled. |
| CACHE_REQUEST_TIMEOUT | Configure the maximum duration of cache upload and download operations for a single job in minutes. Default is 10 minutes. |
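The compression-level variables described above only take effect when the Fastzip archiver is enabled. The following is a minimal sketch of a GitLab Pages job that combines them, assuming the FF_USE_FASTZIP feature flag can be switched on through a CI/CD variable; the script lines are hypothetical placeholders for a real site build.

```yaml
pages:
  variables:
    # Assumption: the runner reads feature flags from CI/CD variables.
    FF_USE_FASTZIP: "true"
    # Uncompressed archive, as required for the GitLab Pages case described above.
    ARTIFACT_COMPRESSION_LEVEL: "fastest"
  script:
    # Placeholder build step; replace with your site generator.
    - mkdir -p public
    - echo "Hello" > public/index.html
  artifacts:
    paths:
      - public
```

Setting the variables on the job rather than globally keeps other jobs on the default compression level, which may be preferable when archive size matters more than speed.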
